Novel optics for ultrafast camera creates new imaging methods

MIT develops "time-folded" optics for ultrafast cameras that capture images at multiple depths simultaneously in one shutter click.

15 August 2018 Research & Development

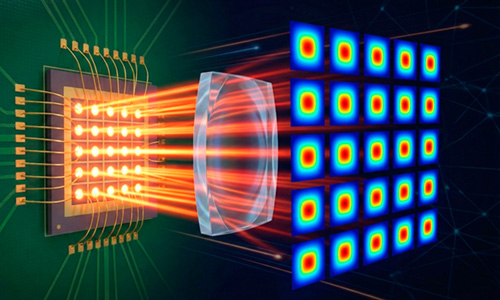

Researchers at MIT, Cambridge, MA, US, have developed novel photographic optics that capture images based on the timing of light reflecting inside the optics, instead of relying on the traditional lens-element approach. The new design promises capabilities for time- or depth-sensitive cameras that are not possible with conventional optics.

The new optics are designed for an ultrafast sensor, known as a streak camera, which resolves images from ultrashort pulses. Streak cameras can, for example, make a trillion-frame-per-second video, scan through closed books, and provide a depth map of a 3-D scene. However, such cameras have so far relied on conventional optics, which have various design constraints.

MIT Media Lab researchers have developed a technique that makes a light signal reflect back and forth off carefully positioned mirrors inside the lens system. A fast imaging sensor captures a separate image at each reflection time. The result is a sequence of images, each corresponding to a different point in time, and to a different distance from the lens. The researchers have called the technique time-folded optics. The work is described in Nature Photonics.

How it works

Barmak Heshmat, first author on the paper, commented, "When you have a fast sensor camera, to resolve light passing through optics, you can trade time for space. That is the core concept of time folding. You look at the optic at the right time, and that time is equal to looking at it in the right distance."
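The trade between time and space that Heshmat describes comes down to the speed of light: a delay in arrival time corresponds to a length of optical path. A minimal sketch of that conversion, assuming propagation in vacuum (the function names are illustrative and not taken from the paper):

```python
# Speed of light in vacuum, in metres per second (exact by SI definition).
C_M_PER_S = 299_792_458.0


def path_length_m(delay_s: float) -> float:
    """Optical path length covered by light in `delay_s` seconds (vacuum assumed)."""
    return C_M_PER_S * delay_s


def delay_s(path_m: float) -> float:
    """Time light needs to cover `path_m` metres (vacuum assumed)."""
    return path_m / C_M_PER_S


if __name__ == "__main__":
    # One nanosecond of delay corresponds to roughly 0.3 m of path.
    print(f"{path_length_m(1e-9):.4f} m per nanosecond")
    # One picosecond corresponds to roughly 0.3 mm.
    print(f"{path_length_m(1e-12) * 1e3:.4f} mm per picosecond")
```

On this scale, a sensor that resolves picoseconds can distinguish path differences well below a millimetre, which is why timing can stand in for distance.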

The MIT architecture includes a set of semireflective parallel mirrors that reduce, or fold, the focal length every time the light reflects between the mirrors. By placing the set of mirrors between the lens and sensor, the researchers condensed the length of the optical arrangement by an order of magnitude while still capturing an image of the scene.
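The folding idea can be illustrated with simple geometry. The sketch below assumes an idealized cavity in which each round trip between two parallel mirrors adds twice the mirror gap to the optical path; the gap, the direct path, and the target path used here are hypothetical values chosen for illustration, not figures from the MIT work.

```python
import math


def folded_path_mm(mirror_gap_mm: float, round_trips: int,
                   direct_path_mm: float = 0.0) -> float:
    """Total optical path after `round_trips` passes back and forth.

    Idealized assumption: each round trip adds 2 * mirror_gap_mm.
    """
    return direct_path_mm + 2.0 * mirror_gap_mm * round_trips


def round_trips_needed(target_path_mm: float, mirror_gap_mm: float,
                       direct_path_mm: float = 0.0) -> int:
    """Smallest number of round trips whose folded path reaches the target."""
    extra = target_path_mm - direct_path_mm
    if extra <= 0:
        return 0
    # Subtract a tiny epsilon so exact multiples are not rounded up by float noise.
    return math.ceil(extra / (2.0 * mirror_gap_mm) - 1e-9)


if __name__ == "__main__":
    # Hypothetical numbers: a 500 mm path folded into a 25 mm cavity
    # that sits 50 mm (direct path) from lens to sensor.
    n = round_trips_needed(500.0, 25.0, direct_path_mm=50.0)
    print(n, folded_path_mm(25.0, n, direct_path_mm=50.0))
```

With these assumed numbers, nine round trips cover a 500 mm path inside a 50 mm arrangement, which is the kind of order-of-magnitude reduction in length the researchers describe.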

The researchers' system consists of a component that projects a femtosecond laser pulse into a scene to illuminate target objects. Traditional photography optics change the shape of the light signal as it travels through the curved glasses. This shape change creates an image on the sensor.

Instead of heading straight to the sensor, the signal first bounces back and forth between mirrors precisely arranged to trap and reflect light. Each of these reflections is called a "round trip." At each round trip, some light is captured by the sensor, which is programmed to image at a specific time interval, for example, a 1ns snapshot every 30ns.
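The gating example in the text (a 1ns snapshot every 30ns) can be sketched as a periodic schedule of time windows. This is an illustrative model only: the function names and the assumption that the first gate opens at time zero are hypothetical and do not describe the actual sensor control.

```python
from typing import List, Optional, Tuple


def gate_windows_ns(n_gates: int, period_ns: float = 30.0,
                    width_ns: float = 1.0,
                    start_ns: float = 0.0) -> List[Tuple[float, float]]:
    """Return (open, close) times in ns for the first `n_gates` snapshots."""
    return [(start_ns + k * period_ns, start_ns + k * period_ns + width_ns)
            for k in range(n_gates)]


def gate_index(arrival_ns: float, period_ns: float = 30.0,
               width_ns: float = 1.0,
               start_ns: float = 0.0) -> Optional[int]:
    """Index of the gate that is open at `arrival_ns`, or None if none is open."""
    offset = arrival_ns - start_ns
    if offset < 0:
        return None
    k = int(offset // period_ns)
    # Light is recorded only if it arrives within the open part of the period.
    return k if (offset - k * period_ns) < width_ns else None


if __name__ == "__main__":
    print(gate_windows_ns(3))   # first three snapshot windows
    print(gate_index(30.5))     # arrives during the second window
    print(gate_index(15.0))     # arrives while the sensor is not imaging
```

In this model, light arriving between windows is simply not recorded, so each captured frame corresponds to one well-defined arrival time.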

By placing the sensor at a precise focal point, determined by total round trips, the camera can capture a sharp final image, as well as different stages of the light signal, each coded at a different time, as the signal changes shape to produce the image. Heshmat noted, "The first few shots will be blurry, but after several round trips the target object will come into focus".

This new architecture could be useful, Heshmat says, in designing more compact telescope lenses that capture, say, ultrafast signals from space, or for designing smaller and lighter lenses for satellites to image the surface of the ground.

Multizoom and multicolor

The researchers imaged two patterns spaced about 500mm apart from each other, each within line of sight of the camera. An "X" pattern was 550mm from the lens, and a "II" pattern was 40mm from the lens. By precisely rearranging the optics, in part by placing the lens between the two mirrors, they shaped the light so that each round trip created a new magnification within a single image acquisition.

The researchers then demonstrated an ultrafast multispectral camera. They designed two color-reflecting mirrors and a broadband mirror - one tuned to reflect one color, set closer to the lens, and one tuned to reflect a second color, set farther back from the lens. They imaged a mask with an "A" and a "B," with the A illuminated by the second color and the B illuminated by the first color, both for a few tenths of a picosecond.

Heshmat commented that the work "opens doors for many different optics designs by tweaking the cavity spacing, or by using different types of cavities, sensors, and lenses. The core message is that when you have a camera that is fast, or has a depth sensor, you don't need to design optics the way you did for old cameras. You can do much more with the optics by looking at them at the right time."

This work "exploits the time dimension to achieve new functionalities in ultrafast cameras that utilize pulsed laser illumination. This opens up a new way to design imaging systems," said Bahram Jalali, director of the Photonics Laboratory and a professor of electrical and computer engineering at the University of California, Los Angeles. "Ultrafast imaging makes it possible to see through diffusive media, such as tissue, and this work holds promise for improving medical imaging, in particular for intraoperative microscopes."

Video

The following video from the MIT Media Lab's Camera Culture group explains the latest work:
